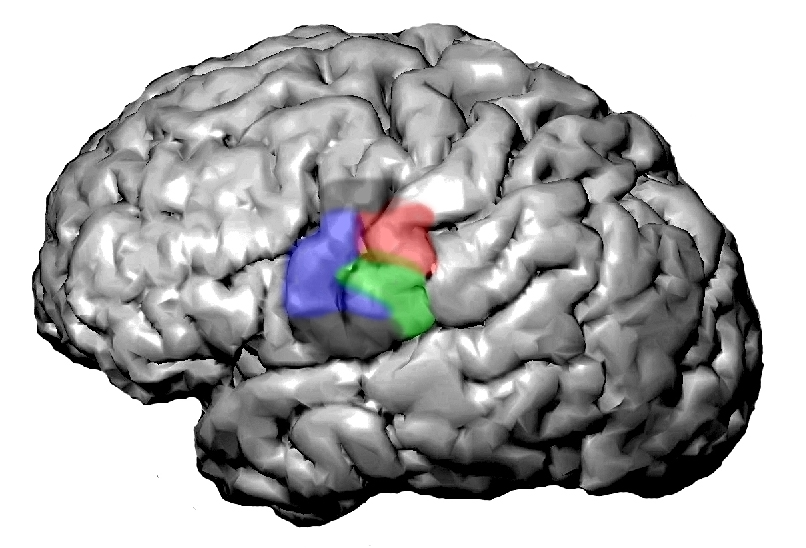

Deep learning is not a new concept in academic circles or behind the scenes at “Big Data” companies like Google and Facebook, where algorithms for automated pattern recognition are a fundamental part of the infrastructure. A collaborative effort at Berkeley Lab is applying deep learning software tools developed for high performance computing environments to a number of “grand challenge” science problems, with computations running at the National Energy Research Scientific Computing Center (NERSC) and other supercomputing facilities. Researchers in Berkeley Lab’s Biological Systems and Engineering Division, including Kris Bouchard, are using a deep learning library to analyze recordings of the human brain during speech production. More information about deep learning at NERSC.